OAuth 2.0 and Sign-In

[a huge THANK YOU to my friend Mike Jones for his invaluable feedback and advice about this long and complicated post]

If there’s a question that I dread receiving – and I receive it very often nonetheless, even from colleagues – it is the following:

“Why can’t I provision in ACS OAuth 2.0 providers in the same way as I provision OpenID providers?”

Or its alternative, linearly-dependent formulation:

“Provider X supports OAuth 2.0; ACS supports OAuth 2.0. How can I connect the two?”

I dread it, because the question in itself is an indication that the asker uses “OAuth 2.0” in its conversational meaning, as opposed to referring to the actual specification and all that entails. For the non-initiated the term “OAuth” has come to be a catch-all term that expresses intentions and beliefs about what one “authentication protocol” should be and do, rather than what it actually does (and how). Therefore, the answer will have to include lots of context-setting and myth-debunking; in fact, the entirety of the answer is context setting, as once the asker knows how OAuth 2.0 really works the question becomes a non-sequitur.

As I am currently sitting on a long flight (I won’t tell you from where, or you’ll hate me :-)) with a batteryful of a laptop, this seems the ideal time to work through that. Be warned, though: this post is a bit philosophical in nature, no coding instructions or walkthroughs here. You must be in the right mood to read it, just like I had to wait to be in the right mood to write it. Also, as everything you read here, this is purely my opinion and does not necessarily reflect the position of my employer or of my esteemed colleagues. Finally: please don’t get the wrong impression from this post. I love OAuth 2.0, I am super-glad it is gaining ground and I like to believe we are contributing to spreading it further with our offering. I just want to save you wasting cycles on expecting it to deliver on something that it doesn’t do.

Short note: if you are not in the mood for reading a long & winding post, here’s a spoiler. OAuth 2.0 is not a sign-in protocol. Sign-in can be implemented by augmenting OAuth, and people routinely do so; however, unless they’re using the OpenID Connect profile of OAuth 2.0 (see the end of this post), no two providers are alike, and that forces library implementers to cover them by enumeration, supplying modules for every provider, rather than by providing a generic protocol implementation as is standard practice for OpenID, WS-Federation, SAML and the like. If you want a good example of that, take a look at the modules list of everyauth. The rest of the post substantiates this statement, going into greater detail.

Some Confusion Is Normal

Even if you don’t drop the words “chamfered” or “skeuomorphism” very often in your conversations, chances are that you were exposed in some measure to the renewed interest in Design. You might even have gone as far as reading “The Design of Everyday Things”, a beautiful classic from Don Norman that I cannot recommend enough, no matter what your discipline is. When I read that book, quite a few years back, I learned about a concept that I believe helps describe what’s going on with our question. I am talking about the concept of affordance. More specifically, perceived affordance. In a nutshell, the affordance of an object is the set of things/actions that can be done with it: a door affords being opened (by pushing or pulling), a chair being sat upon, a hammer being handled. The perceived affordance is the set of visual aspects in an object that give hints on how it can be operated: a door handle invites grabbing and (depending on the shape) turning or pushing, a chair offers a flat and rigid surface at the right height, a hammer has a handle that invites brandishing.

As soon as you recognize that something is a door, no matter how weirdly shaped or placed, you will instantly know what to expect from it: you can open and close it, you can use it for moving between adjacent environments, you might need to unlock it, and so on. That holds even if the specific instance does not offer the specific perceived affordances necessary for a given operation, as you can generalize it from other instances of the door class you encountered in the past.

How’s all this even remotely relevant to the issue at hand? Getting there…

Although they have no physical reality or appearance to offer, authentication protocols are tools from the architect’s and the developer’s conceptual toolbox. As such, they have a number of common uses that the developer and the architect will come to expect from every instance of the “authentication protocol” class. Namely, one common affordance of authentication protocols is “authenticate users with provider A to access a resource on another provider B”. That works for a long list of protocols: SAML, WS-Federation, OpenID 2.0, OpenID Connect, WS-Trust, even Kerberos.

It is that affordance that allows us platform providers to create development libraries that secure your resources without knowing in advance who the identity provider will be, or services that allow you to dynamically plug in new identity providers without knowing anything but the protocol they support and the coordinates that the given protocol mandates.

So, what’s the problem with applying the above to OAuth 2.0? Well, here’s the kicker:

OAuth 2.0 is not an authentication protocol.

I can almost hear you protest! We’ll get to the technical details in a moment, but just want to acknowledge that I understand the reaction. The “OAuth 2.0 is a sign in protocol” narrative had innumerable boosters in the public literature: “Facebook uses OAuth 2.0 for signing you in!” and “In order to sign in our Web site via Twitter, go through their OAuth consent page” and many others. In fact, using OAuth 2.0 as a building block for implementing a sign in flow is not only perfectly possible, but quite handy too: a LOT of Web applications take advantage of that, and it works great. But that does NOT mean that OAuth2 *is* an authentication protocol, with all the affordances you’ve come to expect from one, as much as using chocolate to make fudge does not make (chocolate == fudge) true.

[Unless they are using OpenID Connect] Every provider chooses how to layer the sign-in function on top of OAuth 2.0, and the various implementations do not interoperate: both because of sheer chance (two developers implementing a class for the same concept will not produce the same type, even if they use the same language) and because almost always that’s not an explicit goal of those solutions. Usually providers want to offer access for their users to their own resources; the only external factor is that they want to do so even for applications developed by third parties. That does entail crossing a boundary, which is the staple of authentication protocols; but it happens to be a different boundary than the one you’d normally traverse when implementing sign-in. More details below.

Crossing Boundaries

Let’s decompose a classic App-RP-IP authentication flow, then a canonical OAuth 2.0 flow. We’ll see that the two approaches are designed to cross different chasms: that has consequences that become evident when we try to apply one approach to the problem that the other approach was designed to solve.

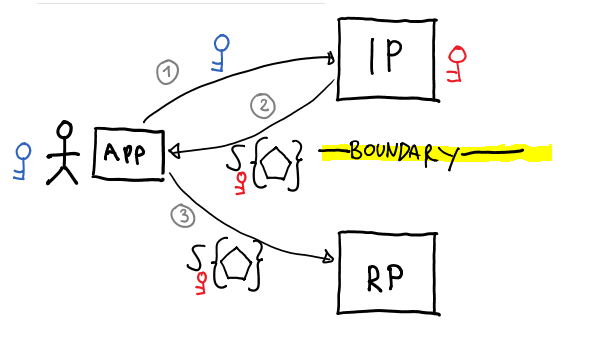

Classic App-IP-RP Authentication Protocol Flow

In a classic authentication protocol, a resource outsources authentication to an external authority. The resource can be called relying party (RP), service provider and similar; the identity provider can be called IdP, OpenID Provider, and so on, depending on your protocol of choice; but the conceptual roles remain the same. The RP and the IP can be run by completely different business entities, and in fact most protocols assume that that is the case. The boundary to be crossed is the one between the identity provider and the resource. That entails establishing messages for invoking the provider asking for an authentication operation and messages/formats for flowing back to the resource the outcome of the authentication operation. The outcome must be presented in a way that admits verification from the resource. Every other detail about the implementation of identity provider and resource can be ignored, as adherence to the protocol as described is all that’s needed to carry an authentication operation.

The figure above shows a classic flow for a nondescript sign-in protocol; the app can be a browser or a rich client app; the IP can be implemented with an STS or whatever other construct that can authenticate users and spit out tokens; and the token is represented as the usual pentagon carrying the signature of its issuer. I am not going to walk you through that flow here; you’ll find countless similar diagrams explained in detail in the last 9 years of posts.

Different authentication protocols have different strictness levels on how the authentication results should be represented: the SAML protocol will only use SAML tokens, WS-Federation admits arbitrary token types (though in practice it almost always uses SAML tokens as well), but in general the idea is that the format is well-known to the resource, which can validate its source and parse it for meaningful info (e.g. user claims). This is NOT optional: the resource knows nothing about the provider apart from the protocol it uses and associated coordinates, hence agreement on the token format is essential.
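To make the "format well-known to the resource" point concrete, here is a minimal sketch of what issuing and validating a signed token looks like. It is not SAML or any real token format: the token layout, the `issuer` claim name, the key and the issuer URL are all assumptions made up for illustration, and an HMAC with a shared key stands in for the issuer's real signing credentials.

```python
import base64
import hashlib
import hmac
import json

# Assumption: a shared HMAC key stands in for the issuer's signing credentials.
ISSUER_KEY = b"demo-key-shared-out-of-band"
# Assumption: hypothetical issuer identifier agreed upon by IP and RP.
TRUSTED_ISSUER = "https://ip.example.com"


def issue_token(claims: dict) -> str:
    """What the IP does: serialize the claims and sign them."""
    body = base64.urlsafe_b64encode(json.dumps(claims).encode())
    signature = hmac.new(ISSUER_KEY, body, hashlib.sha256).hexdigest()
    return body.decode() + "." + signature


def validate_token(token: str) -> dict:
    """What the RP does: check signature and issuer, then read the claims."""
    body, _, signature = token.rpartition(".")
    expected = hmac.new(ISSUER_KEY, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        raise ValueError("signature check failed")
    claims = json.loads(base64.urlsafe_b64decode(body))
    if claims.get("issuer") != TRUSTED_ISSUER:
        raise ValueError("unexpected issuer")
    return claims
```

The RP can run `validate_token` knowing nothing about the IP except the agreed format and key material, which is exactly the kind of agreement a sign-in protocol has to pin down.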

Another interesting thing to note is that the application used by the user for accessing the resource plays absolutely no part in the authentication flow. In most protocols the identity provider will not care about what app the user is leveraging for performing authentication, but only about the credentials (hence the identity) of the user; and the resource won’t care about that either, only validating that the token comes from the right issuer, has not been tampered with, contains the required user info, and so on. The next example ventures a bit into the gray area between authentication and authorization, but I think it captures an important intuition about the general point, hence I’ll go ahead anyway. Say that Judy is trying to open a Word document from a SharePoint library: her ability to do so will depend on the permissions granted to her account and the restrictions assigned to the document; the fact that she is using IE or Firefox will play no part in the authorization decision. The same can be said for all of the rich clients using traditional authentication protocols to call web services.

Canonical OAuth 2.0 Flow

The OAuth 2.0 protocol is aimed at authorizing rather than authenticating. There’s more: its aim is to authorize applications, an artifact that was not playing an explicit role in the flow described in the earlier section.

Applications are the main actor here: the user is involved at the moment of granting his/her permission to the app to access the resource on his/her behalf, but after that the user might disappear from the picture and the app might keep accessing the resource, unattended.

I am sure you already know the canonical story for explaining the problem that OAuth 2.0 was designed to solve:

- A user keeps his/her pictures at Web application A

- The user wants to use Web application B to print those pictures

OAuth 2.0 provides a way for the user to authorize the Web application B to access his/her pictures on A, without having to share his/her A credentials with B. The importance of that accomplishment cannot be overstated: with the explosion of APIs which heralded the rise of the programmable Web, the password relinquishing anti-pattern was going to be completely unsustainable. OAuth is one of the key elements that is fueling the current API wave, and that is a Good Thing.

Given that the regular reader of this blog might be more familiar with the federation protocols than with OAuth 2.0, I’ll give a quick walkthrough of one of the most common flows (OAuth 2.0 supports many). Note that not all the legs I’ll describe are part of the framework in the spec: here my purpose is to help you understand the scenario end to end, and to that purpose I’ll have to add a bit of color and throw in some simplifying assumptions here and there. If an OAuth2 normative reference is what you seek, please refer to the actual specification! (In fact, there are two of those you want to look at: RFC 6749 and RFC 6750.)

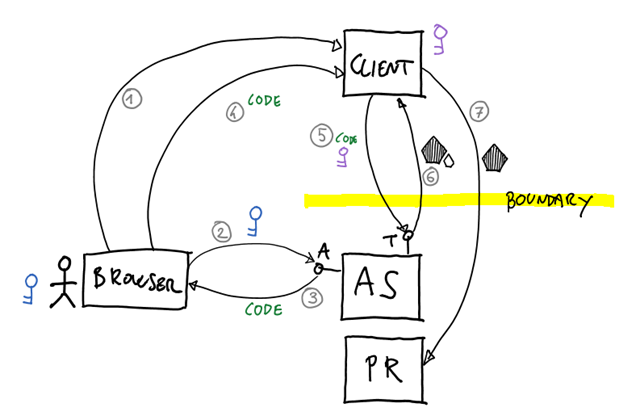

I will introduce the OAuth 2.0 canonical roles during the walkthrough, with the hope that seeing them in action right away will make it easier to grok their function. Here is what happened in the figure above:

1. Say that Marla navigated to a Web site that offers picture print services. We will call that Web site “Client”, for reasons that will become evident momentarily.

   Marla wants to print pictures she uploaded to another Web site, let’s call it A again. The Client happens to offer the possibility of sourcing pictures from A: there is a big button on the page that says “Print pictures from Web site A”, and she pushes it.

2. The Client redirects Marla’s browser to an Authorization Server (AS for short). The AS is an intermediary, an entity that is capable of

   - authenticating Marla to A,

   - asking her if she consents to the Client app accessing her pictures (up to and including what the Client can do with those: read them? modify them? etc) and

   - issuing a token for the Client that can be used to carry out the actions Marla consented to.

   The redirect message carries the ID of the Client, which must be known beforehand by the AS; what the Client intends to do with the resource; and some other stuff required to make the flow function (e.g. a return URL to return results back to the Client).

   The AS, and specifically its Authorization Endpoint (it has more than one), takes care of rendering all the necessary UI for authenticating Marla, assisting her in the decision to grant or deny access to resources, and so on.

3. Assuming that Marla gives her consent, the AS generates a Code (think of it as a nonspecific string) and sends it back to Marla’s browser with a redirect command toward the return URL specified by the Client.

4. The browser honors the redirect and passes the Code to the Client.

5. The Client engages with another endpoint on the AS, the Token Endpoint. Note that from now on all communications will be server to server: Marla might close the browser, shut down her computer and go for a coffee, and this part of the flow will still take place.

   The Client sends a message to the Token Endpoint containing the just-obtained Code, which proves that Marla consented to the actions that the Client wants to do. Furthermore, the message contains the Client’s own credentials: the same Client ID sent in 2, and some secret (the Client Secret) that the AS can use to verify the identity of the Client and use it in the authorization process. For example, say that Web site A is offering its API under some throttling agreement, and that the Client already exceeded its quota of daily tokens: in that case, even if Marla consented to granting the Client access to her pictures, the Client won’t get the token that would be necessary to do so.

   In this case let’s assume that everything is OK; the AS issues to the Client an Access Token, which can be used to secure calls to the A API which offers access to Marla’s pictures. I can’t believe I made it this far without telling you that in the OAuth 2.0 spec parlance Marla’s pictures are a Protected Resource (PR), and that the A Web Site is the Resource Server (RS).

   In the same leg the AS can also issue a Refresh Token, which is one of the most interesting features of OAuth 2.0 but can be safely ignored for today’s discussion.

6. The Client can finally access the PR. OAuth 2.0 defines how to use the Access Token in the context of HTTP calls, and the Client will have to stick with that; but if it does so, it will be able to access Marla’s pictures programmatically and incorporate them within its own user experience & logic. The miracle of the programmable Web renews itself.
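For readers who think better in code, the messages in the walkthrough can be sketched as follows. The parameter names (`response_type`, `client_id`, `redirect_uri`, `scope`, `state`, `grant_type`, `code`, `client_secret`) come from RFC 6749, and the `Authorization: Bearer` header from RFC 6750; the endpoint URLs, the Client ID, the secret and the scope value are hypothetical, and sending the Client credentials in the form body is just one of the options the spec allows. No network call is made: the functions only build or parse the messages.

```python
from urllib.parse import parse_qs, urlencode, urlparse

# Hypothetical coordinates, for illustration only.
AUTHORIZATION_ENDPOINT = "https://as.example.com/authorize"
CLIENT_ID = "print-shop-client"
CLIENT_SECRET = "not-a-real-secret"
REDIRECT_URI = "https://client.example.com/callback"


def build_authorization_redirect(scope: str, state: str) -> str:
    """Redirect leg: the URL the Client sends Marla's browser to."""
    query = {
        "response_type": "code",       # ask for an authorization Code
        "client_id": CLIENT_ID,        # must be known beforehand by the AS
        "redirect_uri": REDIRECT_URI,  # where the AS sends the browser back
        "scope": scope,                # what the Client intends to do
        "state": state,                # opaque value echoed back by the AS
    }
    return AUTHORIZATION_ENDPOINT + "?" + urlencode(query)


def extract_code(callback_url: str, expected_state: str) -> str:
    """Return leg: pull the Code out of the redirect back to the Client."""
    params = parse_qs(urlparse(callback_url).query)
    if params.get("state", [None])[0] != expected_state:
        raise ValueError("state mismatch")
    return params["code"][0]


def build_token_request(code: str) -> dict:
    """Token leg: form fields the Client POSTs to the Token Endpoint."""
    return {
        "grant_type": "authorization_code",
        "code": code,
        "redirect_uri": REDIRECT_URI,
        "client_id": CLIENT_ID,
        "client_secret": CLIENT_SECRET,
    }


def bearer_header(access_token: str) -> dict:
    """Resource leg: how the Access Token travels on calls to the RS."""
    return {"Authorization": "Bearer " + access_token}
```

Notice what is absent: nothing in these messages tells the Client who Marla is, and nothing describes what the Access Token looks like inside.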

Wow, that took much longer than I expected; and I left out a criminally high number of details! Hopefully this gave you an idea of how one of the most common OAuth2 flows works. Let’s see if we can work with that to extract some insights.

The first observation is obvious. I guess it’s pretty clear that this flow does NOT represent a sign-in operation.

Actually, a sign-in might take place: in #2 Marla had to authenticate (or have a valid session) with A in order to prove she is the Resource Owner hence competent to grant or deny access to it. However OAuth 2.0 does not specify how the authentication operation should take place, or even which outcome it should have: OAuth is interested in what takes place AFTER authentication, that is to say consent granting and consequent Code issuing. If we want to use OAuth as sign-in protocol, the sign-in that takes place in #2 does not help.

The second is a tad more subtle. If somebody felt the need to regulate how this flow should take place, it is reasonable to assume that there is a boundary to cross: and the boundary to be crossed is the one that separates the Client from the AS+RS combination. True, the letter of the specification does not position this boundary as the obvious and only one: AS and RS can be separate entities as well, owned and run by different businesses. In practice, however, the specification does describe all communications between the Client and AS+RS, though it does not give details on AS-RS exchanges. This means that if your solution calls for distinct & separate AS and RS, you’ll have to fill in the blanks on your own: which in turn means that how you fill those blanks will almost certainly be different from how others in the industry solve the same problem.

Too abstract for your tastes? Here’s some *circumstantial* evidence that keeping AS and RS under the same roof is baked into OAuth’s common usage, if not the spec itself.

- The AS must know how to authenticate users who keep resources at the RS

- The AS must know the resources (and their affiliation with respective owners) kept at the RS well enough to render relevant UI for the resource owner to express preferences (which resources? what actions can be performed on them?)

- The RS must be able to validate tokens issued by the AS and understand their authorization directives well enough to enforce them, yet the OAuth 2.0 spec does not mandate specific token formats, callbacks from the RS to the AS for validation, or any other mechanism that would regulate RS-AS communications, offline or online

- In almost all of the OAuth 2.0 solutions found in the wild the AS and the RS positively, consistently live under the same roof. Think of Facebook and Twitter.

You know, it even makes complete business sense. If you have a Web site and you want to offer an API for other Web sites, those other Web sites are the entities you want to enter in a relationship with; those are the ones that you want to charge, throttle, block when something goes wrong, and so on. Again, you just need to look at the market and what happens when an API provider changes its policies to understand what are the parties entering in a contract here, and what is the boundary that needs regulation in this scenario.

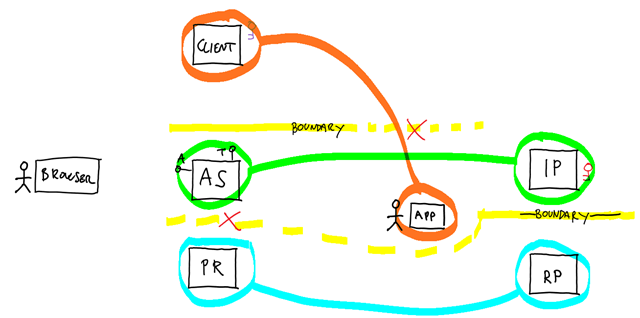

The Trivial Mapping: PR as RP, AS as IP, Client as “App”

Still with me? Excellent. Let’s get back to the original problem; why can’t I just bake into ACS (or a library) the use of OAuth 2.0 as a sign-in protocol so that it will work with all the “OAuth2 providers” out there without custom code, just like it does for OpenID or WS-Federation providers?

To learn more about the problem, let’s simply try to use OAuth 2.0 to perform a sign-in flow. What I observed is that people tend to map OAuth2 roles to sign-in protocol roles, according to correspondences that make just too much intuitive sense to ignore. You can see such a mapping in the diagram above, where I pasted the two flows (sans individual steps, for the sake of readability) and highlighted the correspondences. Let’s spend a few words on those; just remember, intuition can be very treacherous ;-).

- The protected resource/resource server is the entity we seek access to, hence it must be the relying party counterpart: right? They even almost have the same acronym!

- The authorization server authenticates users and issues tokens; and anything that issues tokens can be thought of as an STS, can’t it? And what is an STS, if not the arm of the IP? It’s settled, then: AS == IP.

- The client app is what requests and uses the token; it is also what the user operates in order to perform the desired function: that seems to be a pretty natural fit for the app/client/user agent role in a sign-in protocol. True, it is a bit troubling that in OAuth2 the Client is a role that is much more in focus (and with lots more rules to obey) than the app/client/user agent in sign-in protocols is; OTOH we ran out of entities to map, hence our hands are kind of tied here. Like some nasty element in an equation we are trying to simplify, we can always hope it will cancel out as we move forward.

Does that mapping make sense to you? It usually does; also, it can be made to work and can be quite useful. However, in order to apply this approach you need to handle a couple of thorny issues; and in the process you’ll *have* to occasionally go beyond what the specification mandates, introducing elements that make your solution (and everybody else’s) potentially unique hence non-interoperable out of the box.

Say what you will about service orientation, but it is a very powerful way of thinking about distributed systems. Just glancing at the diagram above you should detect a capital sin occurring not once, but twice: the proposed mapping violates boundaries.

- In a classic sign-in scenario, there is a boundary that separates IP and RP. Per our mapping, that induces a boundary between AS and RS/PR: but OAuth2 describes no such boundary!

In our discussion above we have seen how OAuth 2.0 does not really regulate communications between RS/PR and AS. Whereas in a sign-in protocol – say WS-Federation – the RP knows how to generate a sign-in request for the IP and knows how to validate incoming tokens, in OAuth 2.0 you’ll find a big blank there; co-located AS and PR do not have issues with this, but here you *have* to fill the blank. You’ll have to pick a token format to accept, and you’ll need to establish how to tell if a token is valid: that might entail deciding what are the signing credentials used by the AS, how they are used and getting a hold on the necessary bits; or some other method to validate incoming tokens. Ah, and let’s not forget about extracting claims! They might not be strictly necessary for the sign-in in itself, but experience shows that usually you want some user info contextually to the sign-in operation.

What format will you choose? Whatever you’ll pick, chances are that others will pick something different. Furthermore, people have no way of knowing (programmatically, as in via metadata) what you have chosen.

It gets worse: if you own the RP and you want to work with an existing “OAuth 2.0 provider” (hopefully the expression is changing its meaning for you as we dig deeper in what it actually entails) chances are that its AS and RS/PR are co-located, per the above. In that case its access tokens might be opaque strings in a format you have no hope to crack from your own RP. To make an extreme example: if an AS issues an access token that is simply the primary key of a table in a DB, only a co-located RS/PR which can access the same DB will be able to consume the token; but if you throw a boundary in the picture, as it would happen for an RP (==RS/PR in our mapping) run by a third party, direct token validation is no longer possible (but don’t completely forget the scenario, as we’ll revisit it in the next section).

- In a traditional sign-in scenario, the client/app/user agent used to access the resource is simply not a factor in deciding whether the user should be signed in or not. However in OAuth 2.0 the Client, to which our lower-case client/app/user agent is mapped in this approach, is not only a factor: the Client has its own identity, and that identity is an important element in the AS’ decisions. There is a boundary between the Client and the AS+PR, and as we saw this has important consequences that induce ripples in the mechanics of how Client-AS communications are implemented. Even ignoring the matter of the Client secret, which is not mandatory in all flows: most existing “OAuth 2.0 providers” will expect to know beforehand the identity of the Client, and that means that your transparent sign-in clients will suddenly need to acquire some measure of corporeity. You’ll have to assign Client IDs and use them when requesting codes & tokens; what’s more, you’ll have to provision those, often by registering your client with the AS.

Funny story, tho: very often your existing sign-in client apps will not have an obvious business reason for having their own identity, which means that you’ll have to work something out for sheer implementation reasons. That can be pretty onerous: imagine a rich client app that, as long as the user has valid credentials, with traditional sign-in protocols would do its job no matter how old the bits are or from where they are run. The same app with an ID would now need that ID provisioned with the AS, distributed to (or with) the rich client app, maintained when it expires or gets blocked for some reason, and so on. Remember the example we mentioned earlier in which Marla could be denied access because the Client itself exceeded the daily amount of tokens it can be issued? That’s one example of things that might happen when the client app is not transparent. Also: I subtly shifted the conversation to rich client apps, but imagine what you would do when the client is a browser.

Most of the issues here can be worked around if you own all of the elements in the scenario: for example, you might decide to admit anonymous clients or have special IDs that you reuse across the board (not commenting on whether that would be a good idea or not, that’s for another day). That said, you can see how all this might be a problem if you want to use “OAuth 2.0 providers” for sign-in flows out of the box. In all likelihood, existing providers would want to know the identity of your client app, but more often than not your client would not have an identity of its own. Adding one might not be a walk in the park, and the way in which you’d provision it varies wildly between providers (think of the differences in app provisioning flows between Facebook, Twitter and Live) and often entails manual steps through web UIs.

Lots of words there! You can’t say you weren’t warned 🙂 Let me summarize.

Treating AS as an IP, RS/PR as RP and clients as Clients does not enable you to take advantage of OAuth 2.0 providers for sign-in scenarios without requiring you to write custom code. That mapping violates various boundaries, which in turn requires you to fill blanks in the specs (like which token format will be used for access tokens) or find out how every provider decided to fill those blanks. Furthermore, there might be providers that simply do not admit this kind of mapping (think opaque tokens that cannot be validated without sharing memory with the AS).

That does not mean that this approach is not viable, only that it is not generally applicable if all you know is that you want to “talk to an OAuth 2.0 provider”.
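The opaque-token case is easy to picture with a toy model. In this sketch (all class and method names are hypothetical, and an in-memory dictionary stands in for the DB table) the access token is nothing but a random key into storage that only the AS and a co-located RS can reach.

```python
import secrets


class AuthorizationServer:
    """Issues opaque access tokens: each one is just a key into a private table."""

    def __init__(self):
        # token -> (user, scope); reachable only by code living next to the AS
        self._grants = {}

    def issue(self, user: str, scope: str) -> str:
        token = secrets.token_urlsafe(16)  # random string, carries no claims
        self._grants[token] = (user, scope)
        return token

    def lookup(self, token: str):
        """Return the (user, scope) grant for a token, or None if unknown."""
        return self._grants.get(token)


class CoLocatedResourceServer:
    """Shares storage with the AS, so it can resolve what a token means."""

    def __init__(self, authorization_server: AuthorizationServer):
        self._as = authorization_server

    def authorize(self, token: str, needed_scope: str) -> bool:
        grant = self._as.lookup(token)
        return grant is not None and grant[1] == needed_scope
```

An RP run by a third party holds the same string but has no `lookup` to call and no signature or claims to inspect, so it has nothing to validate the token against.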

Yes, intuition can be misleading, but this really is a case where it’s all in our head. You really cannot blame a PR for not being an RP, or an AS for not being a perfect match for an IP. That’s not what they were designed for, and you can’t blame a screw for not being a nail just because they both have a head and you are used to going around with a hammer.

But let’s not give up yet! Maybe the problem will yield, if we attack it from a different angle.

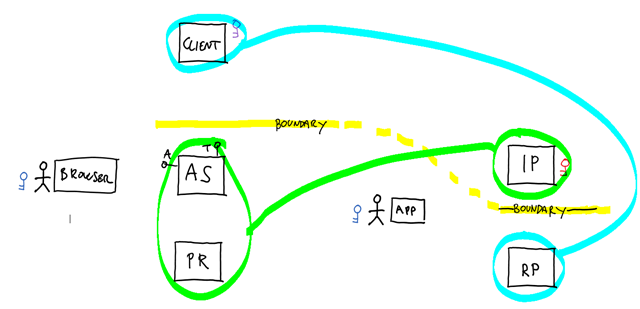

Alternative Mapping: Client as RP, AS+PR as IP

Let’s shuffle the cards a little. The issue with the former mapping was that we focused on the individual nodes of the graphs, instead of trying to preserve their topologies (and with it the boundary constraints).

Here’s an idea: what if we used the Client to model an RP, and the AS+PR/RS to play the role of the IP? Counterintuitive, true, but think about it for a moment:

- The boundary crossing constraints would be preserved

- Instead of “traditional” token validation, a RP could consider a user signed in if it can obtain an access token and successfully access a PR/RS on the user’s behalf

- Keeping PR/RS and AS together saves us from having to define how they communicate with each other; furthermore, that assumption happens to be a good fit for most of the existing providers

- Usually RPs need to be provisioned by the IP before tokens for them can be requested. A RP=>Client mapping is compatible with both the need to assign to the Client an identity and the need to provision it with the AS

MUCH better, right? This does sound much more viable, and it is. LOTS of sign-in solutions layered on top of OAuth 2.0 operate on this principle.

However, it still does not make it possible to create a generic library or a service that would allow you to implement sign-in with any “OAuth2 provider” without provider-specific code. That is actually pretty easy to explain. Although you might achieve some level of generality for obtaining the access token (a task made difficult by the plethora of sub-options/custom parameters/profile differences that characterize the various providers) you’d still have to deal with the fact that every provider offers different protected resources and different APIs to access them. There would be no generic call you could bake into your hypothetical library to prove that your access token is valid, nor fixed user properties you could use to reliably obtain what you need to know about your incoming users. And what holds for a library holds for products and services that would be built with it.
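This is why libraries end up covering providers by enumeration. The sketch below shows the shape such a module table tends to take. Both providers, all of their URLs and their response layouts are invented for illustration and do not describe any real service.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class ProviderModule:
    """Everything the library must know about ONE provider (values hypothetical)."""
    authorization_endpoint: str
    token_endpoint: str
    profile_endpoint: str              # provider-specific protected resource
    normalize: Callable[[dict], dict]  # provider-specific response shape


PROVIDERS: Dict[str, ProviderModule] = {
    "provider-a": ProviderModule(
        "https://a.example.com/oauth/authorize",
        "https://a.example.com/oauth/token",
        "https://a.example.com/api/me",
        lambda raw: {"id": str(raw["id"]), "name": raw["name"]},
    ),
    "provider-b": ProviderModule(
        "https://b.example.com/dialog",
        "https://b.example.com/access_token",
        "https://b.example.com/v1/users/self",
        lambda raw: {"id": raw["user"]["uid"], "name": raw["user"]["display_name"]},
    ),
}


def signed_in_user(provider: str, profile_response: dict) -> dict:
    """Map a provider's profile payload to the single shape the RP expects."""
    return PROVIDERS[provider].normalize(profile_response)
```

Supporting a third provider means writing a third entry by hand; nothing in OAuth 2.0 itself lets the library discover the profile endpoint or the field names.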

Wrap-Up, and the Right Way to Go About It: OpenID Connect

Between the description of the trivial mapping and the Client=>RP one, I hope I managed to answer the question I opened the post with.

Q: “Why can’t I provision in ACS OAuth 2.0 providers in the same way as I provision OpenID providers?”

A: “Because OpenID is a sign-in protocol, and OAuth 2.0 is an authorization framework. OAuth 2.0 cannot be used to implement a sign-in flow without adding provider-specific knowledge. Also, there’s a long blog post with the details.”

This would be a good time to remind you that as usual, THIS ENTIRE POST is all my own personal opinion. Please take it with a huge grain of NaCl.

Also, I ended up writing this post thru THREE intercontinental flights: not very relevant to the topic at hand, but wanted to dispel the notion that I am very prolific 🙂

That settled, the fact that the Client=>RP mapping does not solve the issue really leaves a bitter aftertaste. We were so close!

As it turns out, dear reader, we are not the only ones feeling that way.

Have you ever heard of OpenID Connect? OpenID Connect is the next version of OpenID. It is layered on top of OAuth 2.0, and is very much a sign-in protocol.

One way to think about it is that OpenID Connect formalizes the Client=>RP approach, by providing the details of how to express user info as protected resource, how to redeem access tokens and even which token format should be used (the JSON Web Token: what else? :-)).
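As a rough illustration of what a fixed token format buys the RP, here is a sketch of issuing and validating a JSON Web Token using only the standard library. The claim names `iss`, `sub`, `aud` and `exp` are the registered JWT claims that an OpenID Connect ID token carries; everything else is an assumption of this sketch: the HS256 shared key (many deployments use asymmetric signatures instead), the single-string `aud`, and the issuer and client values. A production RP would rely on a vetted JWT library rather than hand-rolled checks.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWT segments require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def _b64url_decode(text: str) -> bytes:
    """Restore the stripped padding, then decode."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def make_id_token(claims: dict, key: bytes) -> str:
    """Demo issuer side: build an HS256-signed JWT."""
    header = _b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url(json.dumps(claims).encode())
    signing_input = f"{header}.{payload}".encode()
    signature = _b64url(hmac.new(key, signing_input, hashlib.sha256).digest())
    return f"{header}.{payload}.{signature}"


def validate_id_token(token: str, key: bytes, issuer: str, client_id: str) -> dict:
    """RP side: verify signature, issuer, audience and expiry, then return claims."""
    header, payload, signature = token.split(".")
    if json.loads(_b64url_decode(header)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    expected = hmac.new(key, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(_b64url_decode(signature), expected):
        raise ValueError("bad signature")
    claims = json.loads(_b64url_decode(payload))
    if claims["iss"] != issuer or claims["aud"] != client_id:
        raise ValueError("wrong issuer or audience")
    if claims["exp"] <= time.time():
        raise ValueError("token expired")
    return claims
```

Because the format and the claim names are fixed, the same `validate_id_token` works for any provider once the RP knows its issuer, key and client ID, which is what the Client=>RP mapping was missing.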

OpenID Connect is still a draft specification, but it is enjoying a lot of mindshare and is rapidly spreading through the industry: it will help providers to build on top of their existing investment in OAuth 2.0, and consumers to take advantage of their services for sign-in flows. Yes, even with generic libraries 🙂
